Zephyr Project, May 29, 2026

Zephyr Project at 10: Open Source RTOS Adoption, Maturity, and Ecosystem Growth

Over the past decade, Zephyr has evolved from an emerging real-time operating system into a foundational platform for modern embedded development. A new Linux Foundation Research report, Zephyr® Turns 10:…

Blog: Benjamin Cabé, May 29, 2026

Beep Boop, Fastboot — Zephyr Podcast #037

Blog: Benjamin Cabé, May 22, 2026

The Zephyrtastic Episode — Zephyr Podcast #036

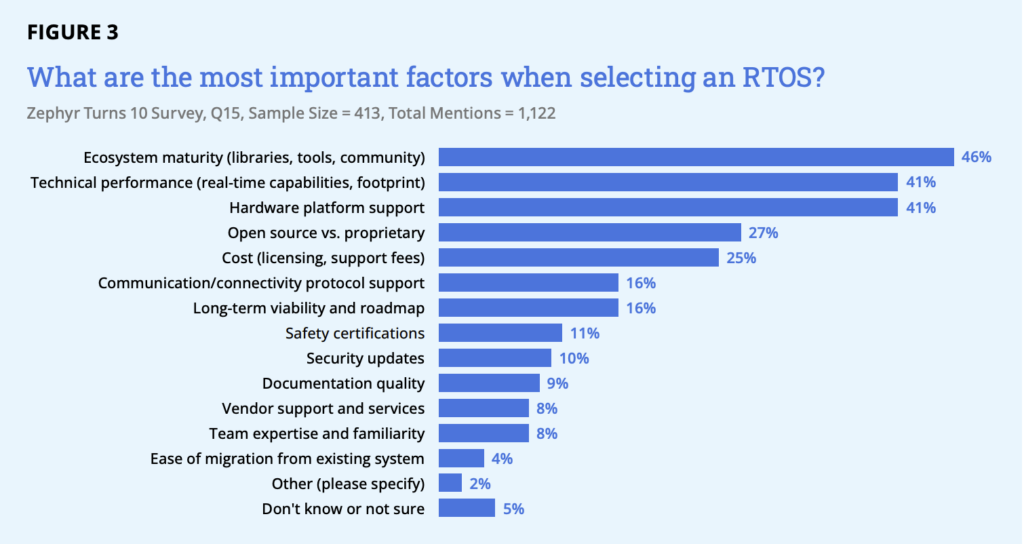

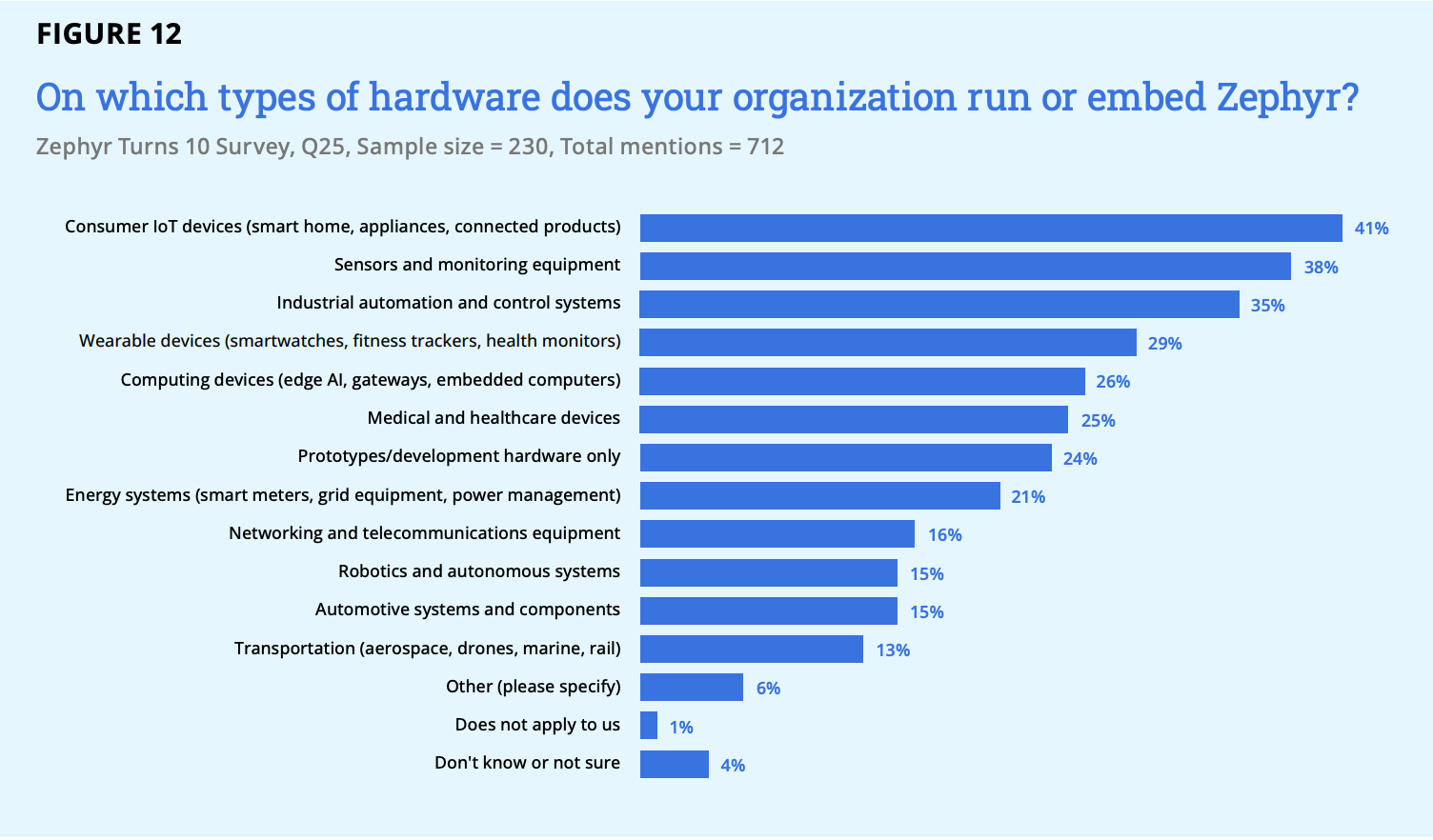

How Organizations Choose and Deploy RTOS

May 21, 2026

Recap – Zephyr Project meetup (March 26, 2026): Rennes, France

May 20, 2026

Zephyr Insights: Code Footprint

May 14, 2026

Zephyr Project at Open Source Summit: Mumbai, India 2026